Rgtsvm implements the following functions on top of the GPU package GTSVM:

- svm: a function to train a support vector machine by C-classification or epsilon regression on the GPU
- predict: a function to predict values based on a model trained by svm in package Rgtsvm
- tune: a function to tune hyperparameters of statistical methods using a grid search over supplied parameter ranges
- plot.tune: visualizes the results of parameter tuning
- load.svmlight: a function to load an SVMlight data file into a sparse matrix

Please check the details in the manual or the vignette.
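The train, predict and tune workflow named in the function list can be sketched as follows. This is a minimal sketch written against e1071, whose interface the text says Rgtsvm matches; that the same calls work unchanged after replacing `library(e1071)` with `library(Rgtsvm)` is an assumption, and the iris data, the split and the parameter grid are illustrative choices only.

```r
# Sketch only: uses e1071, whose svm/predict/tune interface Rgtsvm is said to match.
# Swapping in library(Rgtsvm) is an assumption and would require a CUDA-capable GPU.
library(e1071)

data(iris)
set.seed(1)

# Illustrative 100/50 train/test split.
train_idx <- sample(nrow(iris), 100)
x_train <- as.matrix(iris[train_idx, 1:4])
y_train <- iris$Species[train_idx]
x_test  <- as.matrix(iris[-train_idx, 1:4])
y_test  <- iris$Species[-train_idx]

# Train a C-classification SVM with a radial basis function kernel.
model <- svm(x_train, y_train, type = "C-classification",
             kernel = "radial", cost = 1, gamma = 0.25)

# Predict on held-out rows and measure accuracy.
pred <- predict(model, x_test)
accuracy <- mean(pred == y_test)
print(accuracy)

# Grid search over gamma and cost (cross-validated by default in e1071).
tuned <- tune(svm, train.x = x_train, train.y = y_train,
              ranges = list(gamma = 2^(-3:0), cost = 2^(0:3)))
print(tuned$best.parameters)
```

In e1071, calling `plot(tuned)` on the result dispatches to plot.tune and draws the error surface over the grid.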

GT SVM efficiently handles sparse datasets through the use of a clustering technique. It is also implemented in C/C++ and provides simple functions so that the package can be used as a library. To enable the use of GT SVM without expertise in C/C++, we implemented an R interface to GT SVM that combines the ease of use of e1071 and the speed of the GT SVM GPU implementation. This involved:

- matching the SVM interface in the e1071 package so that R code written around each implementation is exchangeable;
- adding or altering some features in R code, such as cross-validation, which is implemented in C/C++ in e1071 and has not been implemented in GT SVM.

Our implementation is encapsulated in one R package that is backwards-compatible with the e1071 implementation. It has the following features:

- Prediction using multiple GPU cards on a single host to further speed up computation.
- Binary classification, multiclass classification and epsilon regression.
- 4 kernel functions (linear, polynomial, radial basis function and sigmoidal kernel).
- Tuning parameters in the kernel function or in the SVM primal or dual space (C, e).
- Big matrices used as training or prediction data.
- Altering cost values for the minor class or the major class.
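For readers unfamiliar with the four kernel functions named in the feature list, the sketch below writes them out in base R using the parameterization of libsvm and e1071. The helper names (`linear_kernel`, `gram_matrix`, and so on) and the default values for `gamma`, `coef0` and `degree` are hypothetical and chosen for illustration; they are not part of Rgtsvm, and a GPU implementation would evaluate these in parallel rather than in a loop.

```r
# Four SVM kernels in the libsvm/e1071 parameterization.
# Function names and default parameter values are illustrative only.
linear_kernel <- function(u, v) sum(u * v)

poly_kernel <- function(u, v, gamma = 1, coef0 = 0, degree = 3) {
  (gamma * sum(u * v) + coef0)^degree
}

rbf_kernel <- function(u, v, gamma = 1) {
  exp(-gamma * sum((u - v)^2))
}

sigmoid_kernel <- function(u, v, gamma = 1, coef0 = 0) {
  tanh(gamma * sum(u * v) + coef0)
}

# Gram matrix: K[i, j] = kernel(X[i, ], X[j, ]).
gram_matrix <- function(X, kernel, ...) {
  n <- nrow(X)
  K <- matrix(0, n, n)
  for (i in seq_len(n)) {
    for (j in seq_len(n)) {
      K[i, j] <- kernel(X[i, ], X[j, ], ...)
    }
  }
  K
}

X <- rbind(c(1, 0), c(0, 1), c(1, 1))
K <- gram_matrix(X, rbf_kernel, gamma = 0.5)
print(K)
```

Every entry of the Gram matrix is independent of the others, which is why kernel evaluation over many samples maps well onto SIMD hardware.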

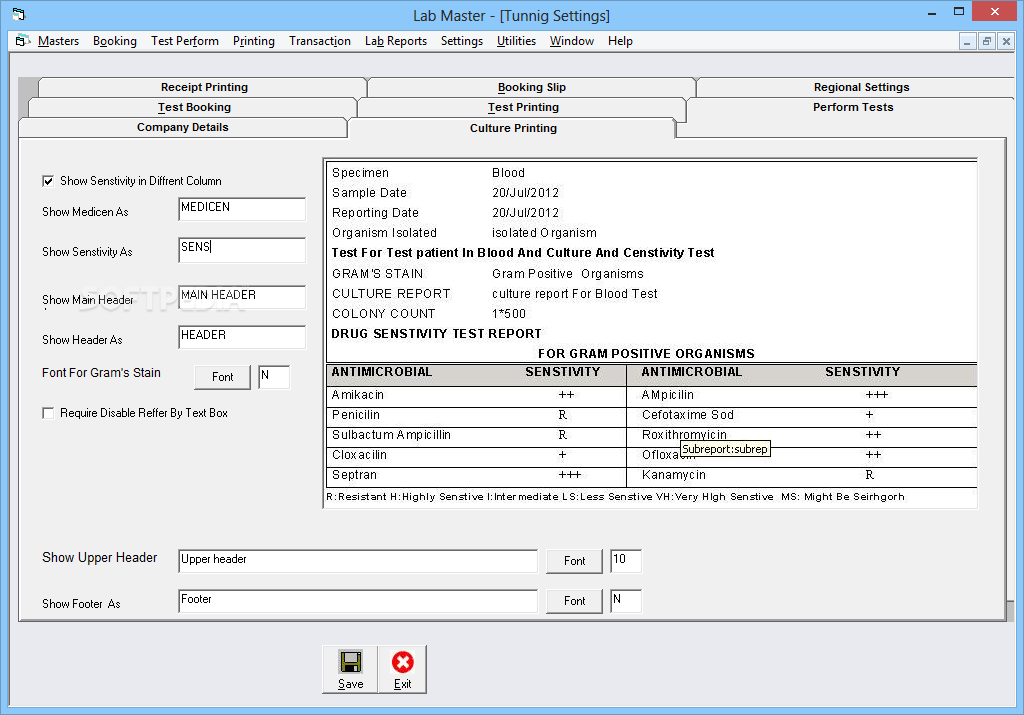


GPUs are a massively parallel execution environment that provides many advantages when computing SVMs:

1. A large number of independent threads build a highly parallel and fast computational engine.
2. Using the GPU Basic Linear Algebra Subroutines (CUBLAS) instead of the conventional Intel Math Kernel Library (MKL) can speed up the application 3 to 5 times.
3. Kernel functions evaluated over very large numbers of samples are more efficient on SIMD (Single Instruction, Multiple Data) hardware.

GPU tools dedicated to SVMs have recently been developed. They provide a command-line interface and binary classification, with functionality comparable to the e1071 package. Among these SVM programs, GT SVM (Cotter, Srebro, and Keshet 2011) takes full advantage of the GPU architecture.

Rgtsvm is an e1071-compatible SVM package for GPU architecture, based on the GTSVM software. SVM is a popular and powerful machine learning method for classification, regression, and other learning tasks. In the R community, many users rely on the e1071 package, which offers an interface to the C++ implementation of libsvm and features C-classification, one-class classification, epsilon-regression, nu-regression, cross-validation, parameter tuning and four kernels (linear, polynomial, radial basis function, and sigmoidal). Although this implementation is widely used, it is not fast enough to handle large-scale classification or regression tasks. To improve performance, we have recently implemented SVMs on a graphical processing unit (GPU).